March 16, 2026

The central structural movement in AI is becoming easier to see. Capability continues to improve, but the more important development is that agents are being normalized as components of institutional infrastructure. This week’s signals point to the same pressure pattern we have been tracking: deployment is advancing into core systems faster than governance and containment architectures are hardening around it.

Three developments make that clear.

Enterprise Agents Are Becoming Part of the Control Layer

The newest enterprise rollouts no longer treat agents as sidecar assistants. Agents are being positioned inside workflow engines, cloud environments, and productivity systems as durable operational actors. Once agents move into those roles, the relevant question is no longer whether they can produce useful outputs. It is whether the institution can govern the authority it has handed them.

That changes the character of risk. A weak answer in a chat window is inconvenient. A weak decision embedded inside a live workflow is a systems problem.

Identity and Credential Expansion Remain the Pressure Point

The strongest recurring instability signal continues to come from identity and permissions. Machine actors are accumulating delegated access across cloud systems, internal tools, and enterprise applications faster than most organizations can observe or meaningfully constrain.

This is not primarily a model-quality issue. It is a structural issue. When non-human actors inherit broad permissions meant for human convenience, the architecture begins to rely on assumption instead of boundary enforcement. Over time, that creates fragility even when the individual systems appear to be functioning normally.
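
One way to make inherited over-permissioning visible is to compare what each machine actor has been granted against what it has actually used. The sketch below is illustrative only: the `AgentGrant` type, the `audit` function, and the permission strings are hypothetical names invented for this example, not any vendor's API. It also assumes the organization can already export grant and usage data per agent.

```python
from dataclasses import dataclass, field


@dataclass
class AgentGrant:
    """Hypothetical record of one agent's delegated access."""
    agent_id: str
    granted: set[str]                             # permissions the agent holds
    used: set[str] = field(default_factory=set)   # permissions seen in use


def excess_permissions(grant: AgentGrant) -> set[str]:
    """Permissions that were granted but never exercised."""
    return grant.granted - grant.used


def audit(grants: list[AgentGrant], max_excess: int = 0) -> dict[str, list[str]]:
    """Map each over-scoped agent to its unused permissions, sorted by name."""
    report: dict[str, list[str]] = {}
    for grant in grants:
        excess = excess_permissions(grant)
        if len(excess) > max_excess:
            report[grant.agent_id] = sorted(excess)
    return report


# Example data (made up for illustration).
grants = [
    AgentGrant(
        agent_id="invoice-bot",
        granted={"billing:read", "storage:write", "crm:delete"},
        used={"billing:read"},
    ),
    AgentGrant(
        agent_id="triage-bot",
        granted={"tickets:read"},
        used={"tickets:read"},
    ),
]

report = audit(grants)
```

Here `report` flags only `invoice-bot`, which holds two permissions it never used. An agent with a long list of unused grants is a likely case of access inherited from a human role rather than designed for the task.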

Security and Governance Are Improving, But Still in Catch-Up Mode

There are now more serious efforts to attach governance and security controls directly to the deployment path. Identity-aware authorization, runtime monitoring, and agent-focused security tooling are all improving. That matters. It means institutions are beginning to respond at the systems layer rather than relying on slogans and disclaimers.

But the direction of travel still favors deployment speed. Controls are being added while autonomy is scaling, not before. That is an improvement over neglect, but it is not yet stability.

The Pattern

Across this week’s developments, the same structural condition persists: institutional embedding is accelerating faster than control maturity.

The result is not dramatic collapse. It is increased sensitivity. Systems become more exposed to small errors, mis-scoped permissions, indirect manipulation, and slow accountability drift. Those are the kinds of forces that accumulate quietly until they stop looking small.

Stability Watch: Permission Boundaries

Stable autonomy depends on clear permission boundaries. Every agent system eventually runs into the same hard question: what exactly is this thing allowed to touch, change, or decide?

If the answer is vague, overly broad, or inherited from human workflows without redesign, instability follows. Small reasoning errors become operational events because the architecture gave them room to matter.

The practical lesson remains unchanged. Capability can scale quickly. Permission boundaries must scale deliberately.
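
A minimal sketch of an explicit permission boundary, assuming a deny-by-default model: anything not listed is refused. The names `Action`, `Boundary`, `authorize`, and `BoundaryViolation` are hypothetical and exist only for this example. The three actions mirror the question above of what an agent may touch, change, or decide.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    READ = "read"      # touch
    WRITE = "write"    # change
    DECIDE = "decide"  # act without human sign-off


class BoundaryViolation(Exception):
    """Raised when an agent attempts something outside its boundary."""


@dataclass(frozen=True)
class Boundary:
    """Explicit allow-list of (resource, action) pairs for one agent."""
    allowed: frozenset

    def permits(self, resource: str, action: Action) -> bool:
        # Deny by default: only listed pairs are permitted.
        return (resource, action) in self.allowed


def authorize(boundary: Boundary, resource: str, action: Action) -> None:
    """Raise BoundaryViolation unless the pair is explicitly allowed."""
    if not boundary.permits(resource, action):
        raise BoundaryViolation(f"{action.value} on {resource!r} is not permitted")


# Example boundary (made up): the agent may read and update tickets,
# but may not decide on them alone or touch payroll at all.
ticket_agent = Boundary(frozenset({
    ("tickets", Action.READ),
    ("tickets", Action.WRITE),
}))

authorize(ticket_agent, "tickets", Action.WRITE)  # passes silently

try:
    authorize(ticket_agent, "payroll", Action.READ)
    payroll_blocked = False
except BoundaryViolation:
    payroll_blocked = True
```

Because the boundary is an allow-list, a reasoning error that steers the agent toward `payroll` stops at `authorize` and never becomes an operational event. Widening the boundary takes a deliberate edit to the list. It does not happen as a side effect of inherited roles.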

Trend Pulse

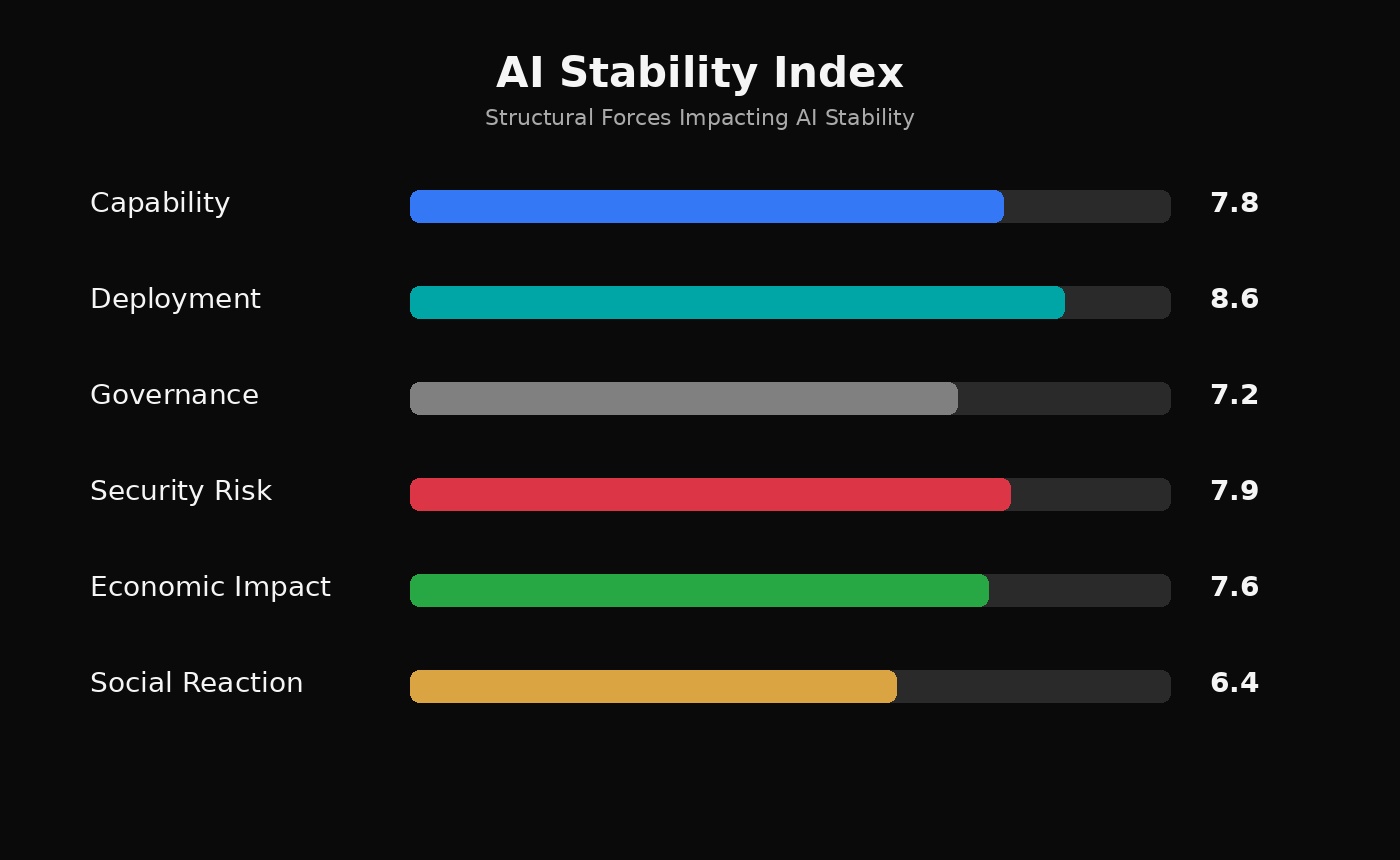

Capability: 7.8

Deployment: 8.6

Governance: 7.2

Security Risk: 7.9

Economic Impact: 7.6

Social Reaction: 6.4

Capability continues to improve, but the clearest signal remains deployment. Enterprise embedding is deepening, governance is strengthening unevenly, and security pressure remains elevated as identity and credential surfaces expand. Social resistance is present, but it is still secondary to institutional adoption and commercial momentum.

The central question is no longer whether AI systems will become more capable. They will. The real question is whether institutions will mature the surrounding structures of authority, permissions, and interruption quickly enough to keep those systems stable.

—

The AI Stability Brief tracks structural signals in artificial intelligence development and focuses on the architectural conditions that allow advanced systems to scale without producing systemic instability.