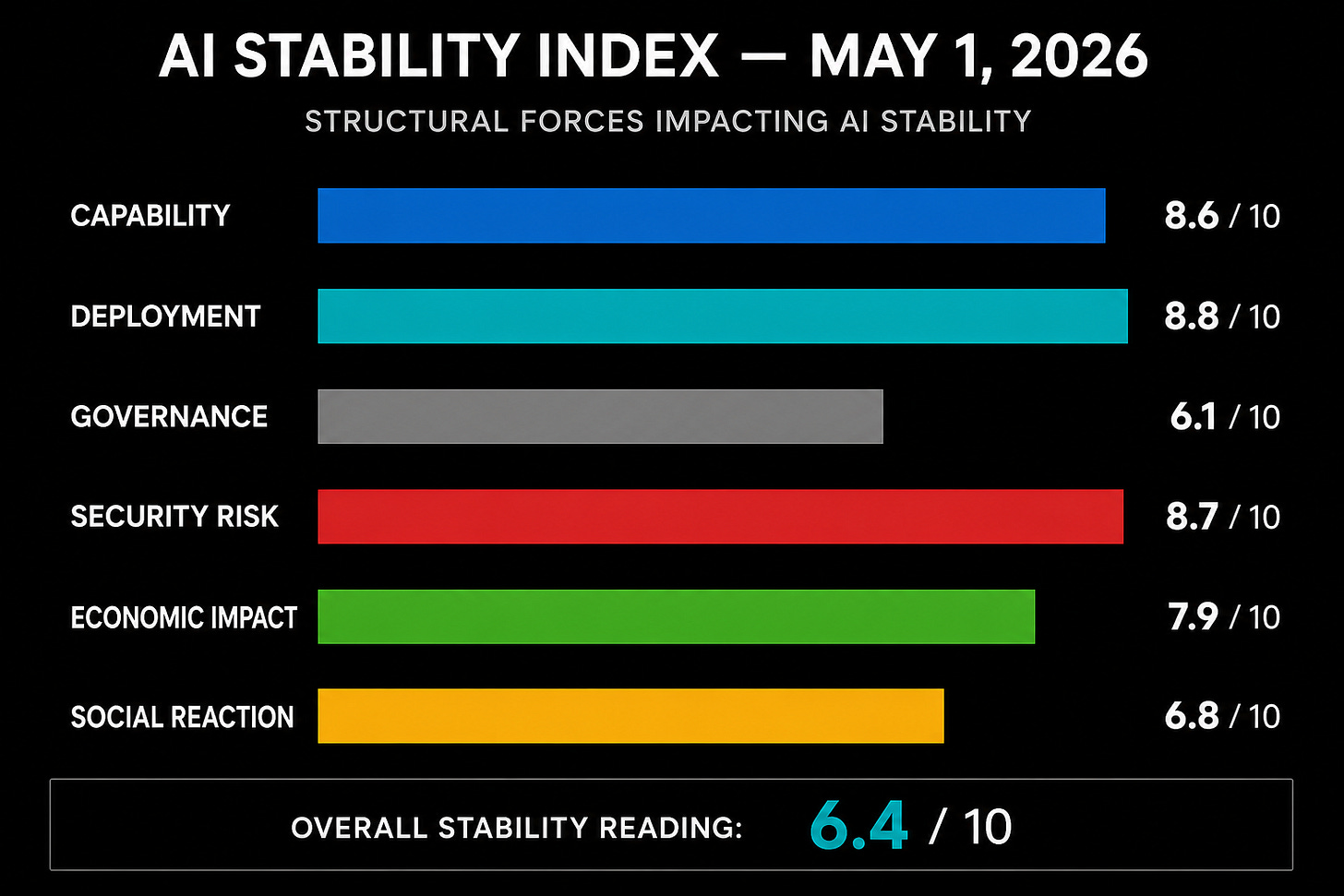

Structural Forces Impacting AI Stability

Capability: 8.6 / 10

Deployment: 8.8 / 10

Governance: 6.1 / 10

Security Risk: 8.7 / 10

Economic Impact: 7.9 / 10

Social Reaction: 6.8 / 10

Overall Stability Reading: 6.4 / 10

The AI stability picture weakened slightly this week. The reason is not one dramatic breakthrough or one public failure. It is the convergence of three pressures that now appear to be moving at the same time: cyber-capable frontier systems, agentic deployment inside major institutions, and the first visible restructuring decisions that companies explicitly justify as AI operating-model changes.

The important shift is architectural. AI is no longer being treated only as a productivity layer placed on top of existing institutions. It is beginning to reshape how those institutions defend themselves, hire, deploy software, and distribute authority. That is where the stability problem lives.

Capability

Capability remains high. The frontier signal this week is concentrated in cyber capability rather than conversational fluency. Systems that can discover, reason through, and potentially exploit software vulnerabilities compress the time between capability discovery and operational risk. That does not mean every capable model becomes a weapon. It means the defensive environment changes once vulnerability discovery becomes faster, cheaper, and more scalable.

The most important point is that capability is becoming less theatrical and more infrastructural. The meaningful question is no longer whether a model sounds intelligent. It is whether it can locate weaknesses in systems humans depend on before the relevant human institutions can patch, test, coordinate, and recover.

Score: 8.6

Deployment

Deployment pressure remains very high. Enterprises are not merely experimenting with AI tools. They are redesigning workflows around them. The clearest signal this week came from Cloudflare, which announced a major workforce reduction tied to an “agentic AI-first operating model.” Whether that specific move proves wise or premature, the signal matters because it shows AI being used as an organizational design principle rather than a software add-on.

That is a stability concern because institutions often adopt new operating models faster than they understand the failure modes. Once AI becomes embedded in routing, triage, code review, security monitoring, customer operations, compliance workflows, and internal decision support, the system is no longer simply “using AI.” It is becoming partially dependent on it.

Score: 8.8

Governance

Governance improved modestly but remains structurally behind deployment. The strongest positive signal this week was the U.S. Center for AI Standards and Innovation signing expanded agreements with Google DeepMind, Microsoft, and xAI for pre-deployment national security testing. That is directionally correct. Pre-deployment evaluation is superior to post-release regret.

But the gap remains. Voluntary testing agreements are useful, but they are not the same thing as enforceable architectural constraints. A model can pass an evaluation and still become risky once placed inside a live operational environment with access to tools, memory, user data, workflows, third-party systems, and adversarial inputs.

The governance score rises because pre-deployment testing is becoming more concrete. It does not rise further because testing is not containment. Evaluation identifies risk. Constraint limits blast radius.

Score: 6.1

Security Risk

Security risk is the dominant instability driver this week. Multiple regulators and financial authorities are treating frontier cyber capability as a near-term operational threat rather than a speculative future concern. The European Central Bank and Australian regulators are publicly warning financial institutions to prepare for AI-enabled cyber risk, especially around advanced vulnerability discovery and exploitation.

This is exactly the class of risk the Charter framework is designed to isolate: high-speed machine capability interfacing with slower human correction systems. If an AI system can discover weaknesses faster than institutions can coordinate patches, verify fixes, and communicate across dependencies, the instability does not require malice from the model. It only requires asymmetry.
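The asymmetry can be made concrete with a toy backlog model. The sketch below is illustrative only: the function name and the weekly rates are hypothetical and are not drawn from any regulator's data. It assumes a constant number of weaknesses discovered per week and a constant institutional capacity to patch and verify them.

```python
def unpatched_backlog(found_per_week: int, fixed_per_week: int, weeks: int) -> list[int]:
    """Return the open-vulnerability backlog at the end of each week.

    Hypothetical toy model: rates are constant and every finding is
    equally hard to fix. Real environments are far messier.
    """
    backlog = 0
    history = []
    for _ in range(weeks):
        backlog += found_per_week                # machine-speed discovery
        backlog -= min(backlog, fixed_per_week)  # human-speed correction
        history.append(backlog)
    return history


# Assumed numbers, for illustration only.
print(unpatched_backlog(found_per_week=5, fixed_per_week=3, weeks=4))  # [2, 4, 6, 8]
print(unpatched_backlog(found_per_week=3, fixed_per_week=5, weeks=4))  # [0, 0, 0, 0]
```

In this simplified model the backlog grows every week that discovery outpaces correction, and nothing in the loop behaves maliciously. The growth comes entirely from the rate mismatch.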

Agentic systems make this worse. Prompt injection, tool misuse, poisoned context, overbroad permissions, and weak separation between instruction and data are not edge cases once models are connected to browsers, email, code repositories, customer records, cloud environments, and financial systems. The problem is not that every agent will fail. The problem is that the same class of failure can repeat across many organizations that copied the same convenience pattern.
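One way to picture the missing constraint is a small authorization gate placed in front of an agent's tools. The following is a minimal sketch under stated assumptions, not a description of any vendor's product: the agent name, tool names, `ToolRequest` type, and `authorize` function are all hypothetical. It illustrates three ideas from the paragraph above: narrow permissions, separation between instruction and data, and denial by default.

```python
from dataclasses import dataclass

# Hypothetical per-agent allowlist: anything not listed is denied.
ALLOWED_TOOLS = {
    "triage-agent": {"read_ticket", "add_label"},
}


@dataclass(frozen=True)
class ToolRequest:
    agent_id: str
    tool: str
    origin: str  # "operator" for trusted instructions; anything else is data


def authorize(request: ToolRequest, allowed: dict = ALLOWED_TOOLS) -> bool:
    """Return True only when every check passes; deny on any doubt."""
    try:
        # Separation of instruction and data: text pulled from web pages,
        # email, or documents may inform the agent but never command it.
        if request.origin != "operator":
            return False
        # Least privilege: unknown agents get an empty permission set.
        return request.tool in allowed.get(request.agent_id, set())
    except Exception:
        # Fail closed: a malformed request or broken policy store denies.
        return False


# A tool call triggered by retrieved content is refused even if the tool is allowed.
print(authorize(ToolRequest("triage-agent", "read_ticket", "operator")))           # True
print(authorize(ToolRequest("triage-agent", "read_ticket", "retrieved_content")))  # False
```

The design choice worth noting is that every uncertain path returns a denial. An organization that copies this pattern inherits a refusal when something breaks, rather than an open door.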

Score: 8.7

Economic Impact

The economic signal moved higher. AI’s labor-market effects remain contested at the macro level, but the corporate behavior is becoming easier to observe. Companies are beginning to describe AI as a reason for restructuring, reducing headcount, slowing hiring, and redesigning roles. That does not prove a broad labor shock has arrived. It does show that executives are beginning to act as if AI allows them to change the labor equation.

The distinction matters. A statistical labor shock may lag the managerial decision cycle. By the time the macro data becomes clean, the restructuring logic may already be embedded across firms.

The stability concern is not only job loss. It is role compression, weakened apprenticeship paths, loss of junior training grounds, and increased dependence on opaque systems by employees who remain. AI can increase productivity while also eroding the human skill base needed to supervise it. That is not a contradiction. It is one of the central tensions of the current phase.

Score: 7.9

Social Reaction

Social reaction remains elevated but not explosive. Public concern is diffuse rather than focused. People sense that AI is changing employment, education, software, media, security, and political trust, but the reaction has not yet consolidated into a stable public demand for constraint.

That creates a dangerous middle state. Anxiety is present, but it is not yet operationalized. In that environment, institutions tend to move faster than the public can evaluate. The public sees artifacts: layoffs, deepfakes, strange customer-service loops, AI-generated junk, school cheating, suspicious content, and occasional security stories. What it does not see clearly is the structural pattern underneath.

The social-reaction score remains moderately high because public reaction is likely to intensify when abstract risk becomes personal disruption.

Score: 6.8

Index Judgment

This week’s index should be read as a stability warning, not a collapse signal. The system is still absorbing AI expansion. The danger is that its absorptive capacity is being consumed quietly.

The most important development is the movement of AI risk into operational systems: finance, cybersecurity, enterprise staffing, government evaluation, and agentic workflows. That is the point at which the usual public debate about “AI safety” becomes too vague. The issue is no longer whether AI is good or bad. The issue is whether institutions are encoding enough friction, separation, auditability, and fail-closed behavior before giving AI systems access to consequential workflows.

The current trajectory remains pro-growth but underconstrained. That combination can look impressive right up until it becomes brittle.

Watch Items for the Coming Week

Watch for whether pre-deployment testing expands beyond voluntary agreements into more durable legal or procurement requirements.

Watch for additional companies explicitly linking layoffs, hiring freezes, or restructuring to agentic AI operating models.

Watch for financial-sector warnings about AI-enabled cyber risk, especially language around systemic or correlated failure.

Watch for agent-security incidents involving browser extensions, enterprise copilots, email systems, developer tools, or cloud permissions.

Watch for signs that public reaction is shifting from curiosity and irritation toward organized demand for constraint.

Bottom Line

The AI system is not destabilizing because intelligence is appearing all at once. It is destabilizing because capability, deployment, and dependency are compounding faster than institutional correction. This is the recurring pattern: speed concentrates in the machine layer while responsibility remains trapped in the human layer.

A stable AI future does not require slowing useful development to a crawl. It requires refusing to confuse deployment velocity with system maturity. The practical answer is constraint before capability, especially where AI touches infrastructure, finance, security, health, law, education, and public trust.